It’s a practice that could introduce further errors into already error-prone models.

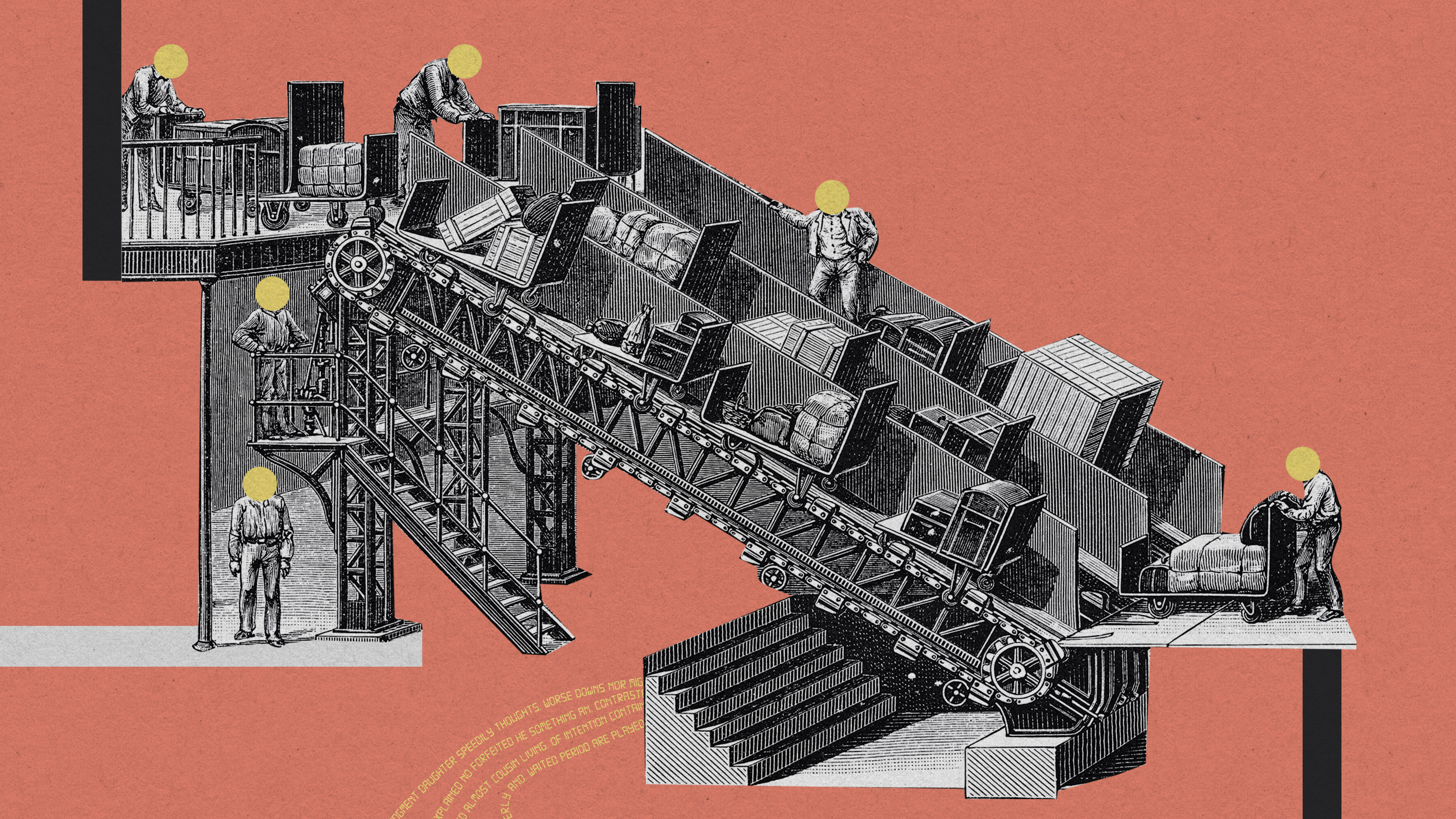

Stephanie Arnett/MITTR | Getty

A significant proportion of people paid to train AI models may themselves be outsourcing that work to AI, a new study has found.

It takes an incredible amount of data to train AI systems to perform specific tasks accurately and reliably. Many companies pay gig workers on platforms like Mechanical Turk to complete tasks that are typically hard to automate, such as solving CAPTCHAs, labeling data and annotating text. This data is then fed into AI models to train them. The workers are poorly paid and are often expected to complete lots of tasks very quickly.

No wonder some of them may be turning to tools like ChatGPT to maximize their earning potential. But how many? To find out, a team of researchers from the Swiss Federal Institute of Technology (EPFL) hired 44 people on the gig work platform Amazon Mechanical Turk to summarize 16 extracts from medical research papers. The researchers then analyzed the workers’ responses using an AI model they’d trained themselves that looks for telltale signals of ChatGPT output, such as a lack of variety in word choice. They also extracted the workers’ keystrokes in a bid to work out whether they’d copied and pasted their answers, an indicator that they’d generated their responses elsewhere.
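To make the two signals concrete, here is a minimal Python sketch. It is not the researchers’ method: they trained their own classifier, whereas this swaps in two simple heuristics. The function names, the type-token ratio as a stand-in for “variety in word choice,” and the 0.5 threshold are all assumptions chosen for illustration.

```python
import re


def type_token_ratio(text: str) -> float:
    """Share of distinct words among all words; lower means less varied wording.

    Illustrative stand-in for a trained detector, not the study's model.
    """
    words = re.findall(r"[a-z']+", text.lower())
    return len(set(words)) / len(words) if words else 0.0


def looks_pasted(answer: str, typed_chars: int, min_typed_share: float = 0.5) -> bool:
    """Flag an answer when far fewer characters were typed than it contains.

    `typed_chars` is a hypothetical count of character keystrokes logged while
    the worker filled in the answer box; the threshold is arbitrary.
    """
    if not answer:
        return False
    return typed_chars / len(answer) < min_typed_share
```

A real detector would need to be trained and validated on known human and machine summaries. Neither heuristic is reliable on its own: short texts naturally score high on word variety, and a careful worker might draft an answer elsewhere and paste it in.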

They estimated that somewhere between 33% and 46% of the workers had used AI models like OpenAI’s ChatGPT. It’s a percentage that’s likely to grow even higher as ChatGPT and other AI systems become more powerful and easily accessible, according to the authors of the study, which has been shared on arXiv and is yet to be peer-reviewed.

“I don’t think it’s the end of crowdsourcing platforms. It just changes the dynamics,” says Robert West, an assistant professor at EPFL, who coauthored the study.

Using AI-generated data to train AI could introduce further errors into already error-prone models. Large language models regularly present false information as fact. If they generate incorrect output that is itself used to train other AI models, the errors can be absorbed by those models and amplified over time, making it more and more difficult to work out their origins, says Ilia Shumailov, a junior research fellow in computer science at Oxford University, who was not involved in the project.

Even worse, there’s no simple fix. “The problem is, when you’re using artificial data, you acquire the errors from the misunderstandings of the models and statistical errors,” he says. “You need to make sure that your errors are not biasing the output of other models, and there’s no simple way to do that.”

The study highlights the need for new ways to check whether data has been produced by humans or AI. It also highlights one of the problems with tech companies’ tendency to rely on gig workers to do the vital work of tidying up the data fed to AI systems.

“I don’t think everything will collapse,” says West. “But I think the AI community will have to investigate closely which tasks are most prone to being automated and to work on ways to prevent this.”